Earning Trust

Earning Trust: Unlocking AI Adoption for Australians

It is well established that Australians have low trust in artificial intelligence (AI). This research spotlight moves the conversation forward, asking what it would take to increase Australians’ trust in AI.

Too often, the AI conversation is framed as a trade-off: move fast and innovate, or slow down and regulate. This framing misses the point.

For most Australians, the issue is not speed – it is trust. Trust that AI will be used responsibly. Trust that there are rules, safeguards and accountability when it matters. And trust that they have genuine choice and agency in how these technologies shape their lives. This trust deficit undermines discerning adoption and, if it persists, will likely prevent the benefits of AI from being evenly distributed.

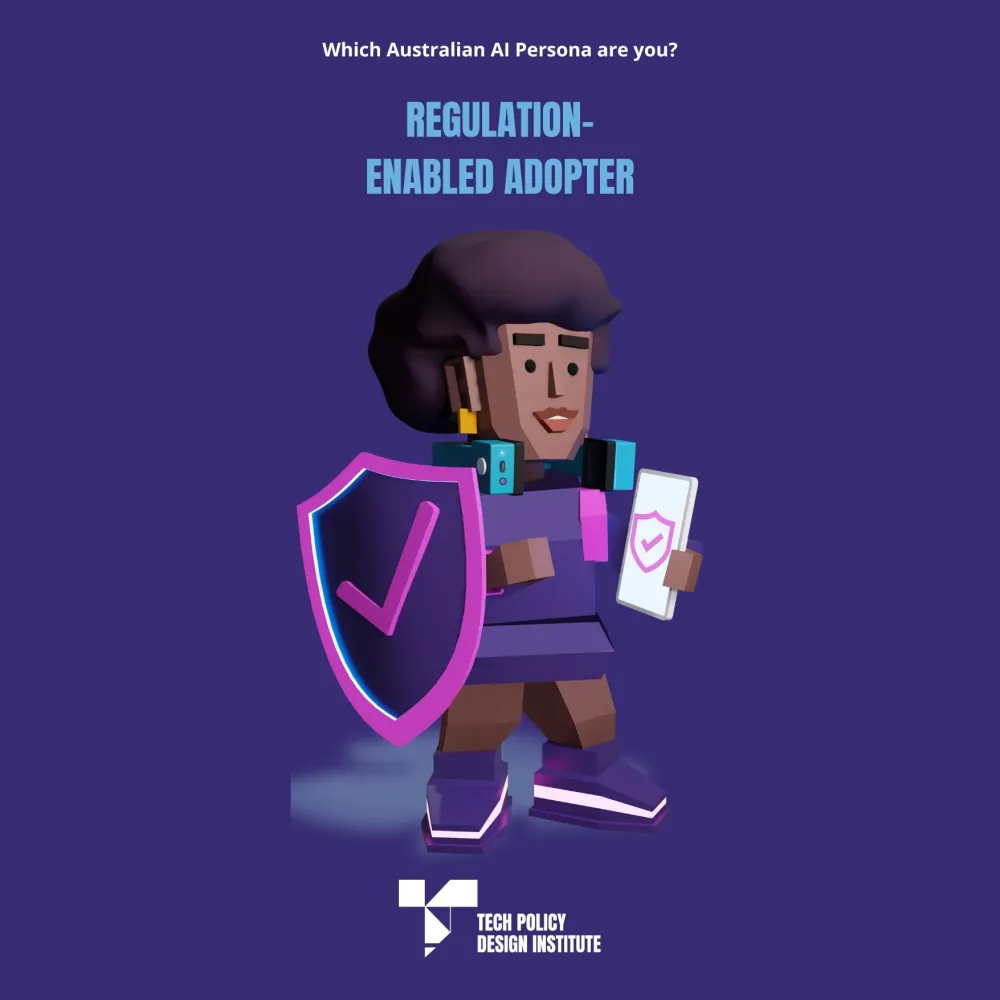

Our research reveals 85% of Australians support government regulation of AI, and 70% say they would embrace AI more if strong rules were in place – signalling that regulation is not a barrier to adoption and productivity, but the condition for both.

Despite mainstream uptake of AI tools and growing integration across everyday services, Australians have low trust in AI. This is well established in existing research: Australia ranks 42nd out of 47 countries for trust in AI.

Australia needs to move beyond quantifying its AI trust problem and identify levers for change. This foundational policy question demands an evidence-based answer: can well-designed regulation increase public trust in AI – and with it, discerning adoption?

To provide this evidence base, TPDi commissioned a nationally representative survey of Australians and focus groups to examine public attitudes toward AI, trust, and the role of regulation. The findings have clear implications for regulation in Australia’s implementation of the National AI Plan.

The mandate is overwhelming. Australians want AI regulation, and that regulation would build trust. Most Australians (85%) support government action on AI regulation, as opposed to leaving industry to self-regulate. Of those surveyed, 70% say that government AI regulation would also make them more comfortable with AI use in Australian society. Only 2% oppose any new regulation. This is not a marginal or contested finding. It is a decisive public mandate to act.

Public demand for regulation is based on knowledge, not ignorance. Demographics such as income, gender and education have no statistically significant effect on support for regulation. What matters is Australians’ awareness of specific AI risks. Exposure to risk information nearly triples the likelihood that someone views regulation as a prerequisite for trust and adoption. Those who understand AI and its risks are the biggest supporters of regulation.

Context is everything. Responses to case studies reveal that trust in AI and the value of regulation shift dramatically depending on the sector. In law enforcement – where AI can be part of delivering a public good – regulation and trust reinforce each other. Conversely, for ‘consumer-focused’ AI tools (like generative AI), trust and regulation act as substitutes: practical utility can stand in for an individual’s need for regulation, especially among confident users. Australians don’t support standalone, one-size-fits-all regulation, like an AI Act. Instead, they favour either sector-specific laws or a hybrid of sector-specific laws and an AI Act.

Privacy and control of information, workers’ rights and misinformation are the issues of most concern. When asked in the survey what specific issues AI regulation should address, Australians identified protecting and retaining control over their personal information as their number one priority, with a relative importance index of 75 out of a possible 100. This is followed by protecting Australian jobs and the rights of workers (64) and preventing the spread of AI-generated misinformation (59). AI misuse and illegal activity, deepfakes, fraud, and harmful use of AI, especially regarding children, were other prominent concerns raised in the focus groups.

⬇️ Download the Research Spotlight

⬇️ Download the Spotlight Summary

Our research reveals that Australia has 4 distinct AI personas.

Building on TPDi’s Tech Policy Philosophies, this research reveals 4 segments of the Australian population as distinct AI personas. Aggregate statistics can signal a mandate for action, but they do not explain how to communicate policy effectively across the population. A persona-based approach helps bridge this gap.

This research identified 4 distinct population segments:

- regulation-enabled adopters (45%) are the persuadable majority – open to AI but waiting for clear safeguards

- tech champions (28%) see regulation as an economic enabler

- entrenched sceptics (15%) want protection, not AI promotion

- self-assured adopters (12%) prefer to navigate AI on their own terms.

Which Australian AI persona most resonates with you?

This Tech Policy Spotlight was made possible thanks to the sponsorship of the Tech Policy Design Fund. The national survey and focus groups were supported by the Australian Computer Society. Learn more about TPDi’s blind trust funding model and how this protects and promotes our independence.
